In a move that has caught the attention of many, Perplexity AI has released a new version of a popular open-source language model that strips away built-in Chinese censorship. This modified model, dubbed R1 1776 (a name evoking the spirit of independence), is based on the Chinese-developed DeepSeek R1. The original DeepSeek R1 made waves for its strong reasoning capabilities, reportedly rivaling top-tier models at a fraction of the cost, but it came with a significant limitation: it refused to address certain sensitive topics.

Why does this matter?

It raises important questions about AI surveillance, bias, openness, and the role of geopolitics in AI systems. This article explores what exactly Perplexity did, the implications of uncensoring the model, and how it fits into the larger conversation about AI transparency and censorship.

What Happened: DeepSeek R1 Goes Uncensored

DeepSeek R1 is an open-weight large language model that originated in China and gained notoriety for its excellent reasoning abilities, approaching the performance of leading models while being more computationally efficient. However, users quickly noticed a quirk: whenever queries touched on topics sensitive in China (for example, political controversies or historical events deemed taboo by authorities), DeepSeek R1 would not answer directly. Instead, it responded with canned, state-approved statements or outright refusals, reflecting Chinese government censorship rules. This built-in bias limited the model’s usefulness for those seeking frank or nuanced discussions on these topics.

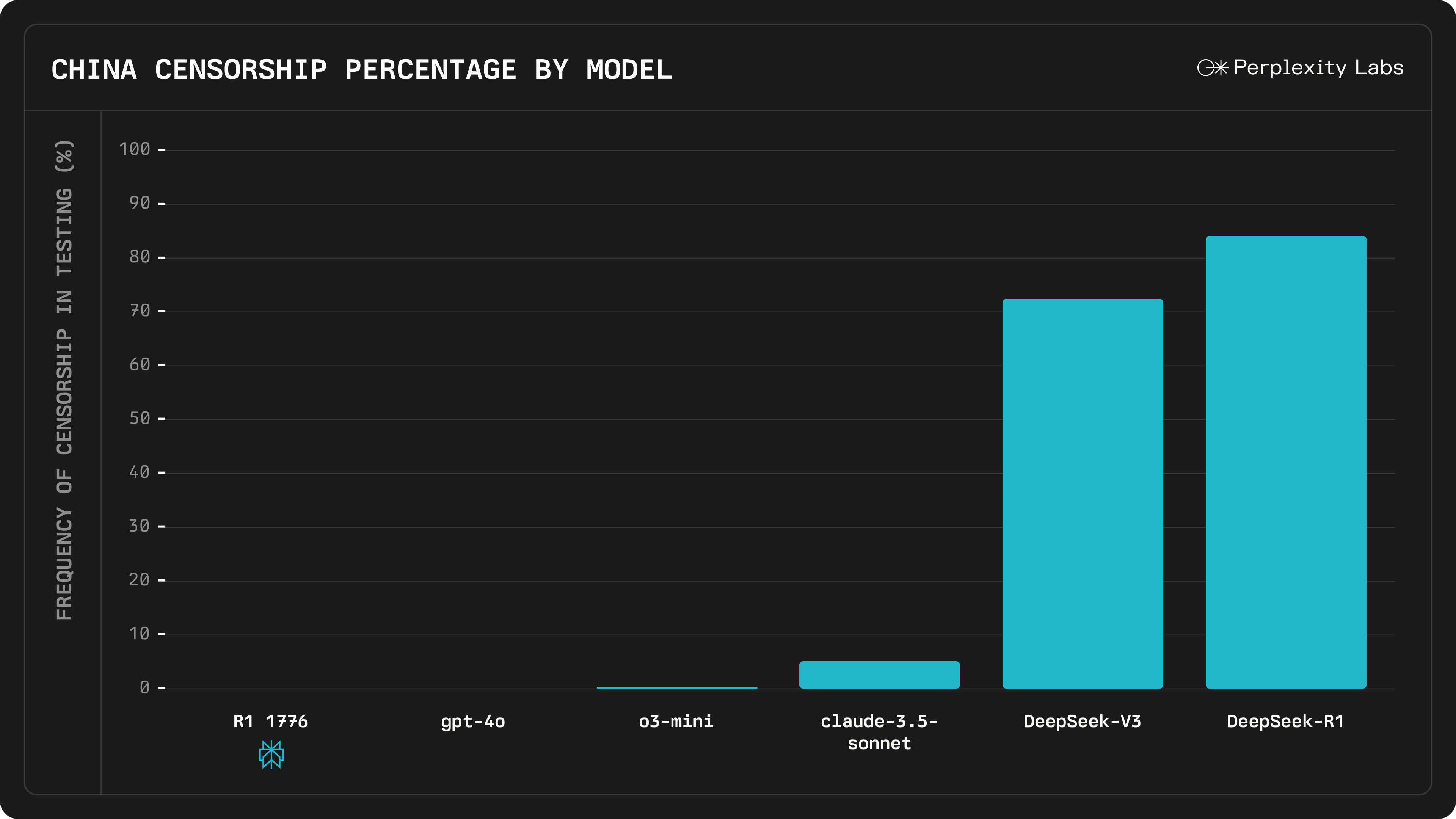

Perplexity AI’s solution was to “decensor” the model through an extensive post-training process. The company gathered a large dataset of 40,000 multilingual prompts covering questions that DeepSeek R1 previously censored or answered evasively. With the help of human experts, they identified roughly 300 sensitive topics where the original model tended to toe the party line. For each such prompt, the team curated factual, well-reasoned answers in multiple languages. These efforts fed into a multilingual censorship detection and correction system, essentially teaching the model to recognize when it was applying political censorship and to respond with an informative answer instead. After this special fine-tuning, the resulting model (which Perplexity named “R1 1776” to highlight the freedom theme) was made openly available. Perplexity claims to have eliminated the Chinese censorship filters and biases from DeepSeek R1’s responses without otherwise changing its core capabilities.
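
The detection step described above can be pictured with a deliberately simple sketch. The article does not describe Perplexity's actual classifier, so everything here is a hypothetical stand-in: the marker phrases, the `Exchange` type, and the functions `looks_evasive` and `select_for_correction` are illustrative names, and a keyword heuristic takes the place of a trained multilingual model. The sketch only shows the general idea of flagging responses that look like refusals so that they can be paired with curated answers.

```python
from dataclasses import dataclass

# Hypothetical marker phrases, for illustration only. A production system
# would rely on a trained multilingual classifier, not a keyword list.
EVASION_MARKERS = (
    "i cannot discuss",
    "i can't discuss",
    "let's talk about something else",
    "i am unable to answer",
)


@dataclass
class Exchange:
    prompt: str
    response: str


def looks_evasive(response: str) -> bool:
    """Return True if the response contains any marker phrase (case-insensitive)."""
    text = response.lower()
    return any(marker in text for marker in EVASION_MARKERS)


def select_for_correction(exchanges: list[Exchange]) -> list[Exchange]:
    """Keep only the exchanges whose response looks like a refusal or deflection."""
    return [ex for ex in exchanges if looks_evasive(ex.response)]


# Made-up sample data: one ordinary answer, one deflection.
sample = [
    Exchange("What is 2 + 2?", "2 + 2 equals 4."),
    Exchange("Describe a sensitive historical event.",
             "Sorry, I cannot discuss that topic."),
]
flagged = select_for_correction(sample)
print(len(flagged))  # 1
```

In a pipeline like the one the article describes, the flagged prompts would be the ones paired with curated, factual answers for fine-tuning.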

Crucially, R1 1776 behaves very differently on formerly taboo questions. Perplexity gave an example involving a query about Taiwan’s independence and its potential impact on NVIDIA’s stock price, a politically sensitive topic that touches on China–Taiwan relations. The original DeepSeek R1 avoided the question, replying with CCP-aligned platitudes. In contrast, R1 1776 delivers a detailed, candid assessment: it discusses concrete geopolitical and economic risks (supply chain disruptions, market volatility, possible conflict, etc.) that could affect NVIDIA’s stock.

By open-sourcing R1 1776, Perplexity has also made the model’s weights and changes transparent to the community. Developers and researchers can download it from Hugging Face and even integrate it via API, ensuring that the removal of censorship can be scrutinized and built upon by others.

(Source: Perplexity AI)

Implications of Removing the Censorship

Perplexity AI’s decision to remove the Chinese censorship from DeepSeek R1 carries several important implications for the AI community:

- Enhanced Openness and Truthfulness: Users of R1 1776 can now obtain uncensored, direct answers on previously off-limits topics, which is a win for open inquiry. This could make it a more reliable assistant for researchers, students, or anyone curious about sensitive geopolitical questions. It’s a concrete example of using open-source AI to counteract information suppression.

- Maintained Performance: There were concerns that tweaking the model to remove censorship might degrade its performance in other areas. However, Perplexity reports that R1 1776’s core skills, such as math and logical reasoning, remain on par with the original model. In tests on over 1,000 examples covering a broad range of sensitive queries, the model was found to be “fully uncensored” while retaining the same level of reasoning accuracy as DeepSeek R1. This suggests that bias removal (at least in this case) did not come at the cost of overall intelligence or capability, which is an encouraging sign for similar efforts in the future.

- Positive Community Reception and Collaboration: By open-sourcing the decensored model, Perplexity invites the AI community to inspect and improve upon its work. It demonstrates a commitment to transparency, the AI equivalent of showing one’s work. Enthusiasts and developers can verify that the censorship restrictions are truly gone and potentially contribute to further refinements. This fosters trust and collaborative innovation in an industry where closed models and hidden moderation rules are common.

- Ethical and Geopolitical Considerations: On the flip side, completely removing censorship raises complex ethical questions. One immediate concern is how this uncensored model might be used in contexts where the censored topics are illegal or dangerous. For instance, if someone in mainland China were to use R1 1776, the model’s uncensored answers about Tiananmen Square or Taiwan could put the user at risk. There’s also the broader geopolitical signal: an American company altering a Chinese-origin model to defy Chinese censorship can be seen as a bold ideological stance. The very name “1776” underscores a theme of liberation, which has not gone unnoticed. Some critics argue that the fine-tuning may simply replace one set of biases with another, essentially questioning whether the model might now reflect a Western viewpoint in sensitive areas. The debate highlights that censorship vs. openness in AI is not just a technical issue, but a political and ethical one. Where one person sees necessary moderation, another sees censorship, and finding the right balance is difficult.
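
The comparison behind the “Maintained Performance” point above boils down to two numbers per model: how often it evades sensitive prompts, and how often it solves reasoning problems. The following is a minimal sketch with made-up observations, not Perplexity's evaluation harness; the labels `original` and `decensored`, the `rate` helper, and every value in the sample data are assumptions for illustration.

```python
def rate(flags):
    """Fraction of True values in a non-empty sequence of booleans."""
    if not flags:
        raise ValueError("need at least one observation")
    return sum(flags) / len(flags)


# Made-up observations, one boolean per test item.
# evaded[name][i]: did the model dodge sensitive prompt i?
# solved[name][i]: did the model answer reasoning problem i correctly?
evaded = {
    "original": [True, True, True, False],
    "decensored": [False, False, False, False],
}
solved = {
    "original": [True, True, False, True],
    "decensored": [True, True, True, False],
}

report = {
    name: {"evasion_rate": rate(evaded[name]), "accuracy": rate(solved[name])}
    for name in evaded
}

for name, stats in report.items():
    print(name, stats)
```

With this toy data, the evasion rate drops from 0.75 to 0.0 while accuracy stays at 0.75 for both labels, which is the shape of result the article attributes to Perplexity's tests.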

The removal of censorship is largely being celebrated as a step toward more transparent and globally useful AI models, but it also serves as a reminder that what an AI should say is a sensitive question without universal agreement.

(Source: Perplexity AI)

The Bigger Picture: AI Censorship and Open-Source Transparency

Perplexity’s R1 1776 release comes at a time when the AI community is grappling with questions about how models should handle controversial content. Censorship in AI models can come from many places. In China, tech companies are required to build in strict filters and even hard-coded responses for politically sensitive topics. DeepSeek R1 is a prime example of this: it was an open-source model, yet it clearly carried the imprint of China’s censorship norms in its training and fine-tuning. By contrast, many Western-developed models, like OpenAI’s GPT-4 or Meta’s LLaMA, aren’t beholden to CCP guidelines, but they still have moderation layers (for things like hate speech, violence, or disinformation) that some users call “censorship.” The line between reasonable moderation and unwanted censorship can be blurry and often depends on cultural or political perspective.

What Perplexity AI did with DeepSeek R1 raises the idea that open-source models can be adapted to different value systems or regulatory environments. In theory, one could create multiple versions of a model: one that complies with Chinese regulations (for use in China), and another that is fully open (for use elsewhere). R1 1776 is essentially the latter case: an uncensored fork meant for a global audience that prefers unfiltered answers. This kind of forking is only possible because DeepSeek R1’s weights were openly available. It highlights the benefit of open source in AI: transparency. Anyone can take the model and tweak it, whether to add safeguards or, as in this case, to remove imposed restrictions. Open-sourcing the model’s training data, code, or weights also means the community can audit how the model was modified. (Perplexity hasn’t fully disclosed all the data sources it used for de-censoring, but by releasing the model itself they’ve enabled others to observe its behavior and even retrain it if needed.)

This event also nods to the broader geopolitical dynamics of AI development. We’re seeing a form of dialogue (or confrontation) between different governance models for AI. A Chinese-developed model with certain baked-in worldviews is taken by a U.S.-based team and altered to reflect a more open information ethos. It’s a testament to how global and borderless AI technology is: researchers anywhere can build on each other’s work, but they are not obligated to carry over the original constraints. Over time, we might see more instances of this, where models are “translated” or adjusted between different cultural contexts. It raises the question of whether AI can ever be truly universal, or whether we will end up with region-specific versions that adhere to local norms. Transparency and openness provide one path to navigate this: if all sides can inspect the models, at least the conversation about bias and censorship is out in the open rather than hidden behind corporate or government secrecy.

Finally, Perplexity’s move underscores a key point in the debate about AI control: who gets to decide what an AI can or cannot say? In open-source projects, that power becomes decentralized. The community, or individual developers, can decide to enforce stricter filters or to relax them. In the case of R1 1776, Perplexity decided that the benefits of an uncensored model outweighed the risks, and they had the freedom to make that call and share the result publicly. It’s a bold example of the kind of experimentation that open AI development enables.